What’s Driving the Push to the Edge

The traditional cloud model wasn’t built for the real-time world we’re living in. It made sense when most data moved in predictable patterns: from a device, through the internet, into a data center often hundreds or thousands of miles away. But that model hits a wall when you’re dealing with self-driving cars, instant health monitoring, or factories running on autonomous systems. Timing isn’t a luxury in those worlds. A few milliseconds matter.

By 2026, we’re deep in the era of hyperconnectivity. We’re talking tens of billions of connected devices, from smartwatches to smart streetlights, all generating constant streams of data and each one expecting fast, local responses. That explosive growth is putting pressure on systems designed for slower, less demanding traffic. You can’t wait for a signal to bounce to a distant server and back when the decision being made is whether to stop a car or adjust a medical dose.

Centralized data centers just can’t keep up. Distance introduces latency, no matter how fast your connection is. Piling more traffic into a few massive facilities leads to bottlenecks, lag, and sometimes dangerous delays. That’s why edge computing is stepping in, not as a replacement for cloud, but as its sharper, faster counterpart: one built for the pace and expectations of today’s connected reality.
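To put rough numbers on the distance problem, here is a minimal Python sketch that estimates the best-case round trip over optical fiber. It assumes light travels through fiber at about 200,000 km/s (roughly two thirds of its speed in a vacuum) and ignores routing, queuing, and processing time, so real-world figures will be higher. The distances are illustrative, not measurements.

```python
# Best-case propagation delay only; real networks add routing and queuing time.
SPEED_IN_FIBER_KM_PER_S = 200_000  # approximate speed of light in optical fiber


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip time in milliseconds for a fiber path of this length."""
    one_way_seconds = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_seconds * 1000


if __name__ == "__main__":
    # Hypothetical distances chosen for illustration.
    for label, km in [
        ("edge node nearby", 1),
        ("regional site", 100),
        ("distant data center", 2000),
    ]:
        print(f"{label:>20}: {round_trip_ms(km):.2f} ms")
```

Even before any server does work, a 2,000 km path costs about 20 ms per round trip under these assumptions, which is why a faster connection alone can’t close the gap.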

Edge Computing: What It Actually Means

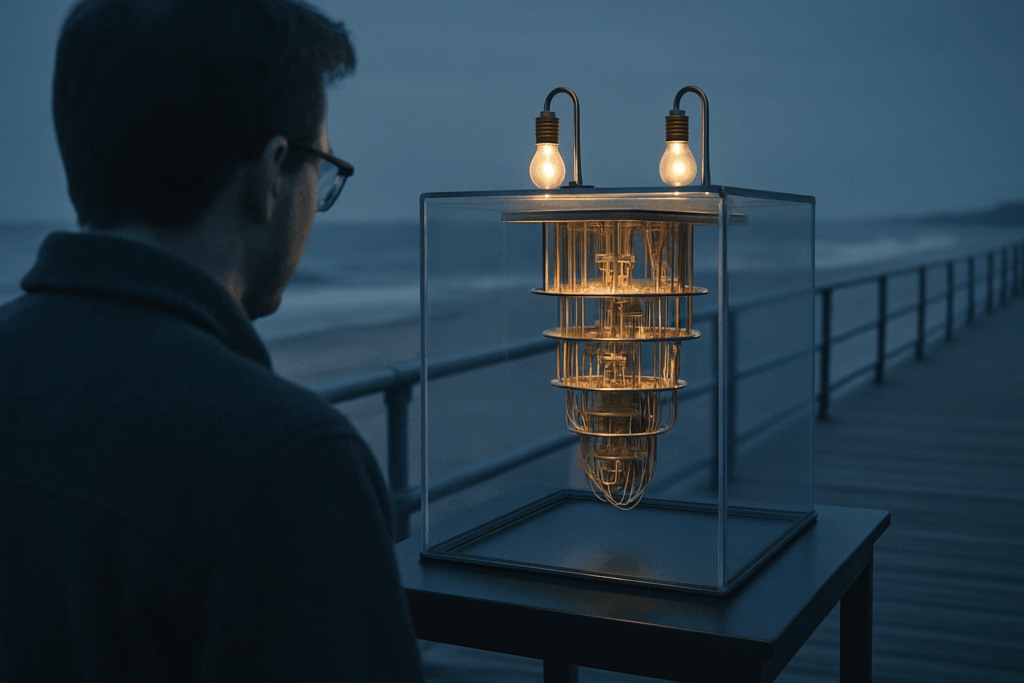

Think of edge computing as a local pit stop for your data. Instead of sending everything to a far-off cloud server to get processed, edge computing handles it closer to where the data is created, at the edge of the network. That might be a sensor in a factory, a security camera on a street, or a wearable device on your wrist.

Here’s where it stands apart:

Cloud computing sends data to centralized data centers, often far away.

Fog computing sits somewhere in the middle: regional nodes that process data a bit closer than cloud servers.

Edge computing? It’s right there at the source. It processes data on or near the device itself.

The benefits are solid: lower latency (data doesn’t have to travel far), faster processing (things happen in near real time), and better privacy (less data goes out into the wild). For industries running real-time applications, like autonomous vehicles, remote surgeries, or city-wide IoT systems, it’s not a bonus; it’s the bare minimum.

As we stack more expectations on our devices (to think, respond, and adapt without delay), edge computing becomes less of a tech buzzword and more of a necessity. It’s physical, immediate, and built for the speed the future demands.

Key Applications in IoT

Edge computing isn’t just a technical upgrade; it’s front and center across real-world industries.

Take smart cities. Traffic lights now adapt to real-time road data, cutting wait times and emissions. Pollution sensors monitor air quality block by block, pushing alerts when readings cross a threshold. And city cameras aren’t just storing footage; they’re analyzing it on the spot to identify congestion, accidents, or even suspicious activity.

In industrial IoT, the edge brings serious value to factory floors. Machines equipped with edge sensors don’t just report breakdowns; they predict them. That means less downtime, faster fixes, and tighter production schedules. Data doesn’t need to travel to a far-off server to be useful. It works where it’s generated.
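As a rough idea of what spotting trouble on the machine itself can look like, the sketch below flags sensor readings that stray far from their recent rolling average. This is a simple z-score anomaly check standing in for a real predictive-maintenance model, not a description of any particular product. The class name, window size, and threshold are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class DriftDetector:
    """Flags readings that deviate sharply from a rolling window of recent values."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0):
        self.readings = deque(maxlen=window)  # old values drop off automatically
        self.z_threshold = z_threshold

    def update(self, value: float) -> bool:
        """Record a reading; return True if it looks anomalous versus recent history."""
        anomalous = False
        if len(self.readings) >= 5:  # wait for a little history before judging
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        self.readings.append(value)
        return anomalous


if __name__ == "__main__":
    detector = DriftDetector()
    # Made-up vibration readings; the last one is a deliberate spike.
    for reading in [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 4.0]:
        if detector.update(reading):
            print(f"possible fault developing: reading {reading}")
```

Because the check needs only a short window of recent values, it can run on modest hardware next to the machine and raise a flag without a trip to a remote server.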

Healthcare is seeing a shift too. Wearables monitor vitals like heart rate or oxygen levels in real time. The data stays local unless there’s a signal that something’s off. No lag, no delay, just instant alerts. For patients managing chronic conditions, this could mean real-time intervention and peace of mind.
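The "stay local unless something’s off" pattern can be sketched in a few lines of Python. The example below checks each sample on the device and produces an outbound alert only when a value falls outside a limit. The field names and limits are hypothetical and chosen purely for illustration; they are not clinical guidance.

```python
from dataclasses import dataclass


@dataclass
class Vitals:
    heart_rate_bpm: int
    spo2_percent: int


# Hypothetical limits for illustration only, not clinical guidance.
HEART_RATE_RANGE = (40, 130)
MIN_SPO2 = 92


def check_vitals(sample: Vitals) -> list[str]:
    """Return alert messages for one sample; an empty list means nothing is sent."""
    alerts = []
    low, high = HEART_RATE_RANGE
    if not low <= sample.heart_rate_bpm <= high:
        alerts.append(f"heart rate out of range: {sample.heart_rate_bpm} bpm")
    if sample.spo2_percent < MIN_SPO2:
        alerts.append(f"low oxygen saturation: {sample.spo2_percent}%")
    return alerts


def process_locally(samples: list[Vitals]) -> list[str]:
    """Evaluate every sample on the device and collect only what must leave it."""
    outbound = []
    for sample in samples:
        outbound.extend(check_vitals(sample))
    return outbound
```

Normal readings never leave the device in this sketch; only the alert text does, which is where the latency and privacy gains come from.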

And at home? Smart devices no longer wait for the cloud to tell them what to do. Whether it’s your thermostat reacting to your habits or your fridge flagging low inventory, edge-powered homes adapt faster and operate more smoothly. These systems respond in milliseconds, not seconds, which makes automation feel… natural.

In short, edge computing is trimming the fat and sharpening the response time right where we need it most.

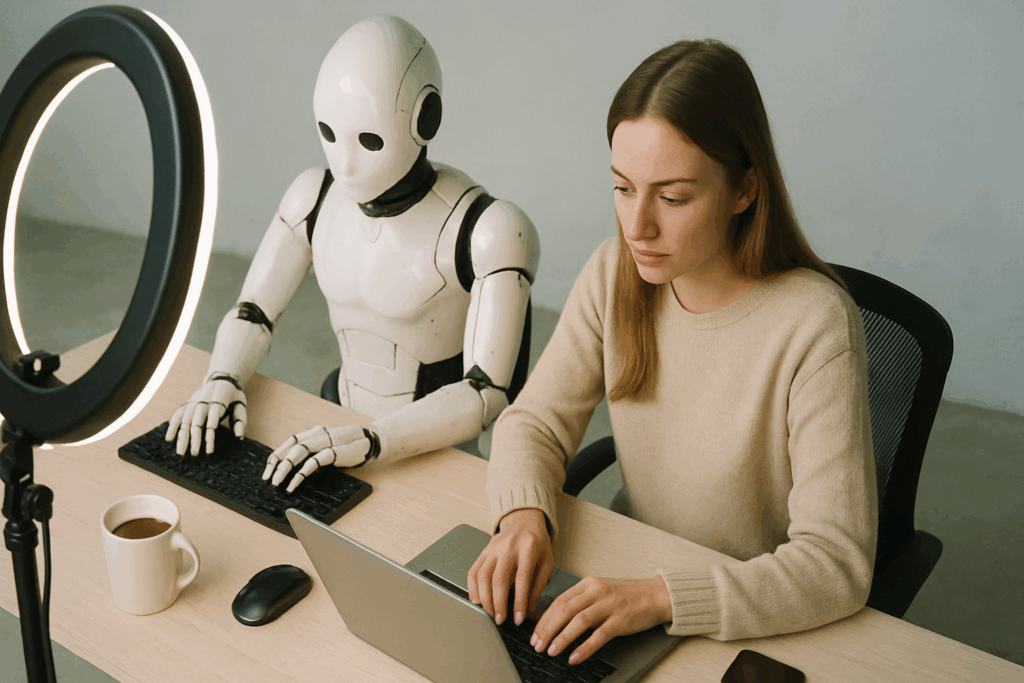

Why Edge is Essential for Generative AI at the Device Level

Generative AI is no longer confined to data centers and high-powered servers. Thanks to edge computing, models are being deployed directly onto devices (phones, cameras, sensors), cutting down latency and server strain in real-time tasks. This shift isn’t just about speed. It’s about creating smarter, more responsive experiences.

On-device model inference means the AI does its thinking locally. No more waiting for a round trip to the cloud. Whether it’s a voice assistant offering custom replies or a fitness tracker tailoring recommendations, decisions happen in milliseconds, without relying on busy central servers.

This setup also conserves bandwidth. Instead of offloading massive data packets, devices handle more in-house, sending less up the line. That lowers operational costs and improves privacy, too: it’s a lot harder for sensitive data to leak when it never leaves your phone in the first place.

For a deeper look into how this tech is being used, check out Top Use Cases of Generative AI Technology in 2026.

Challenges that Still Exist

As edge computing grows in importance, it also brings along a set of complex challenges that organizations must navigate. With data processing moving closer to the source, ensuring security, scalability, and data consistency becomes more difficult, but no less vital.

Growing Security Risks at the Edge

One of the most pressing concerns with edge computing is security. Every new endpoint, whether it’s a smart camera, wearable device, or factory sensor, becomes a potential target.

Expanded attack surface: The more edge devices in play, the more opportunities for breaches.

Limited local protections: Many edge nodes lack the robust security measures found in centralized systems.

Real-time vulnerabilities: Because edge computing thrives on real-time response, security checks that lag behind can leave systems exposed.

The Real Cost of Infrastructure

While edge computing is seen as a faster, more decentralized alternative to traditional models, it comes at a price, especially when scaling across an enterprise.

Hardware investment: Building and maintaining edge nodes can be more expensive than relying solely on cloud servers.

Energy and maintenance demands: Edge devices require regular updates and physical upkeep.

Harder to centralize resources: Distributing hardware means added logistical complexity and cost.

Data Consistency Challenges

Keeping data consistent across distributed systems is another major hurdle.

Siloed data: Edge devices may operate offline or semi-connected, leading to data fragmentation.

Latency in synchronization: Updates between edge and cloud components may not happen in real time, potentially causing version conflicts.

Governance struggles: Ensuring compliance and data integrity across dynamic edge environments poses regulatory and ethical challenges.
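One common way to catch the version conflicts mentioned above is an optimistic concurrency check: each record carries a version number, and the cloud accepts an edge update only if it was based on the current version. The sketch below is one possible approach under that assumption, not a prescribed design. `CloudStore`, `Record`, and the key `valve-7` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Record:
    value: str
    version: int  # incremented on every accepted write


class CloudStore:
    """Toy in-memory store that rejects writes based on a stale version."""

    def __init__(self):
        self.records: dict[str, Record] = {}

    def sync(self, key: str, new_value: str, base_version: int) -> bool:
        """Accept an edge write only if it was based on the current cloud version."""
        current = self.records.get(key)
        current_version = current.version if current else 0
        if base_version != current_version:
            return False  # another writer got there first: report a conflict
        self.records[key] = Record(new_value, current_version + 1)
        return True


if __name__ == "__main__":
    store = CloudStore()
    print(store.sync("valve-7", "open", base_version=0))    # first write is accepted
    print(store.sync("valve-7", "closed", base_version=0))  # stale base is rejected
```

A rejected write tells the edge device to fetch the latest record and decide how to merge, rather than silently overwriting someone else’s update.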

Edge computing offers compelling speed and responsiveness, but overcoming these structural and operational obstacles is essential for sustainable deployment.

Where It’s Headed

As we move deeper into the era of ultra-connectivity, edge computing is becoming more than just a niche solution; it’s setting the stage for the next generation of intelligent systems. Here’s where edge computing is headed and how it’s poised to transform industries.

The Power Duo: Edge AI Chips + 5G/6G Networks

The combination of powerful edge AI chips and real-time, high-bandwidth networks like 5G and emerging 6G will significantly amplify the capabilities of connected devices.

Faster processing at the source: AI-enabled chips let devices make decisions locally, minimizing reliance on centralized infrastructure.

Real-time responsiveness: Coupled with ultra-low-latency 5G/6G, actions can be taken in milliseconds, which is ideal for sectors like autonomous driving and remote robotics.

Scalable intelligence: As chip capabilities improve, even low-power devices will be able to handle advanced AI inference at the edge.

Logistics and Energy Sectors See a Surge

Enterprises are quickly adopting edge tech to streamline operations and reduce bottlenecks:

Supply chain optimization: Smart logistics use edge sensors and processors for route optimization, asset tracking, and inventory control in real time.

Energy grid management: Edge nodes collect, process, and respond to energy usage patterns locally, reducing outages and improving sustainability.

Developers Shift Toward Edge-Native Design

As edge becomes the norm, software approaches are adapting:

Edge-first development: Coding environments, tools, and frameworks now allow developers to build, test, and deploy directly to edge environments.

Microservices architecture: Lightweight, modular apps meant for edge deployment reduce system strain and are highly scalable.

Cross-platform compatibility: With fragmented hardware across industries, developers are emphasizing portability and runtime efficiency.

A Leaner, Smarter Future

Long term, the ubiquity of edge computing will redefine how humans interact with technology:

Devices become more autonomous: From household appliances to industrial controllers, the shift to edge means smarter, faster responses everywhere.

Total system decentralization: With intelligence distributed and localized, there’s less need for bulky infrastructure or constant cloud connectivity.

Human-centered design: Shorter response times and context-aware performance elevate the user experience across all sectors.

Edge computing is no longer an emerging trend; it’s a foundational shift. As hardware, networks, and development practices mature, we’ll see edge become the architecture powering a more efficient, responsive, and intelligent world.